Robots are beginning to enter one of the most personal spaces in our lives: the home. As people grow older, the pace and rhythm of daily life often shift. Tasks that once felt effortless can become genuine challenges.

Carrying a glass of water. Remembering to take medication. Setting the table for dinner. These may seem like small actions, but they are deeply meaningful. They help preserve independence and dignity in everyday life.

For years, engineers have worked toward robots that can help with those everyday moments. The goal is to create practical helpers that move safely through kitchens and living rooms, and the possibility is starting to feel more real.

Researchers at Universidad Carlos III de Madrid (UC3M) have developed a new way for a robot to coordinate both of its arms smoothly and on its own. They presented the work at IROS 2025, one of the world’s largest robotics conferences.

Their system pushes service robots toward more natural movement and simpler training for everyday tasks at home.

When robot arms collide

Getting a robot to move one arm smoothly is already a challenge. Getting two arms to work together without knocking into each other is a whole different story. People manage it without even noticing. Robots do not.

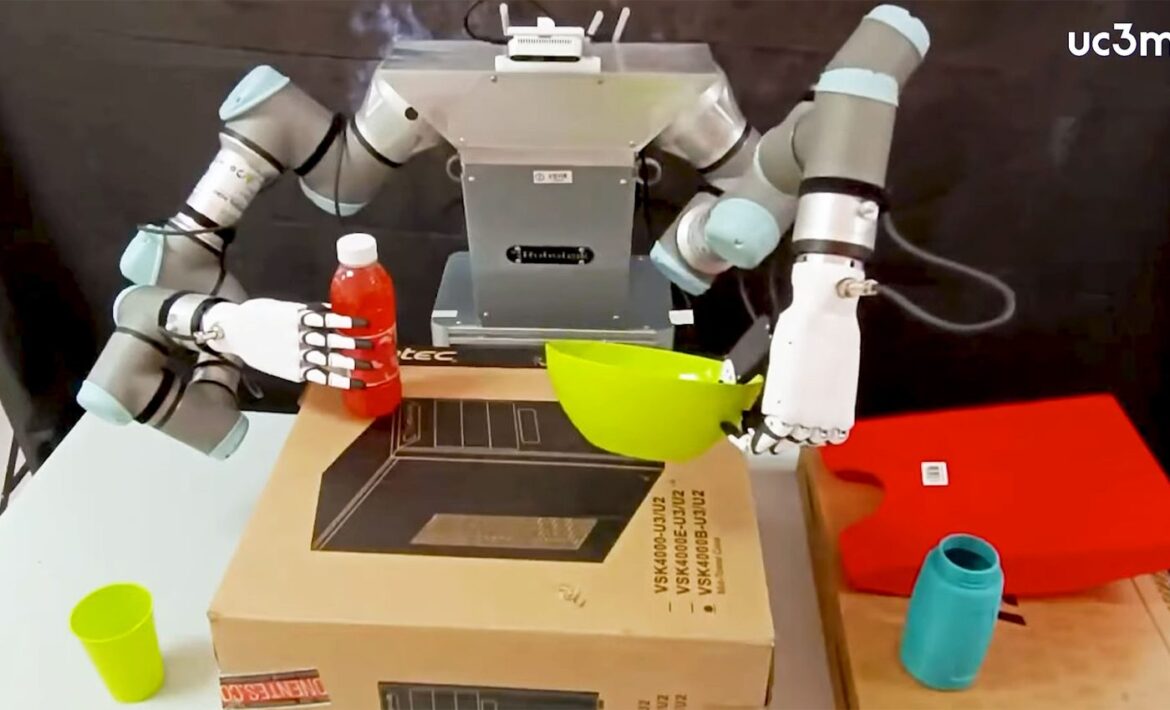

The team tackled this challenge using a robot called ADAM, short for Autonomous Domestic Ambidextrous Manipulator. ADAM already performs assistive tasks in home-like settings.

“It can set the table and clear it afterwards, tidy the kitchen, or bring a user a glass of water or medication at the indicated time,” said Alicia Mora, one of the researchers in the Mobile Robots Group at the UC3M Robotics Lab.

“It can also help them when they are going out by bringing a coat or an article of clothing.”

ADAM was built with older adults in mind. “We all know people for whom simple gestures, such as someone bringing them a glass of water with a pill or setting the table for them, represent a very significant help,” said Ramón Barber, director of the Mobile Robots Group at UC3M.

Robots learn by watching

In the past, programming a robot meant writing endless lines of code to spell out every joint angle and every motion. The process was slow and the result was rigid. If anything in the scene changed, the robot often failed.

The new approach borrows from how people learn. It uses imitation learning. A person shows the robot how to do a task, either by guiding its arm directly or by performing the action while sensors record it. The robot studies the movement and builds a model of it.
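The Python sketch below shows one simple way a robot can "build a model" from demonstrations. It resamples several recorded motions to a common length, then keeps the average path and records how much the demonstrator varied at each step. The function names, the 2D example data, and the per-step mean and standard deviation are illustrative assumptions, not the UC3M team's model.

```python
import numpy as np

def resample(demo, n_steps):
    """Linearly resample one demonstration (T x D array) to n_steps samples."""
    demo = np.asarray(demo, dtype=float)
    old_t = np.linspace(0.0, 1.0, len(demo))
    new_t = np.linspace(0.0, 1.0, n_steps)
    # Interpolate each dimension (x, y, ...) independently onto the new time grid.
    return np.stack([np.interp(new_t, old_t, demo[:, d]) for d in range(demo.shape[1])], axis=1)

def learn_from_demonstrations(demos, n_steps=100):
    """Fit a per-timestep Gaussian (mean, std) to demonstrations of any length."""
    aligned = np.stack([resample(d, n_steps) for d in demos])  # (n_demos, n_steps, D)
    return aligned.mean(axis=0), aligned.std(axis=0)

# Hypothetical data: three demonstrations of a 2D reaching arc, recorded at
# different speeds (40, 55 and 70 samples) and with slightly different heights.
demos = []
for height, length in [(0.9, 40), (1.0, 55), (1.1, 70)]:
    t = np.linspace(0.0, 1.0, length)
    demos.append(np.stack([t, height * np.sin(np.pi * t)], axis=1))

mean, std = learn_from_demonstrations(demos, n_steps=50)
print(mean.shape)  # (50, 2)
```

In models of this kind, the spread (`std`) indicates which parts of a motion the demonstrator repeated precisely and which parts varied from one demonstration to the next.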

But copying is not enough. If a bottle shifts a few inches on a table, a robot that only repeats a recorded motion will miss it. Real life is messy. Objects move. People move.

Adaptive learning breakthrough

To solve this, the researchers combined imitation learning with a mathematical method called Gaussian Belief Propagation.

Each arm learns its job separately. The two arms then share information through Gaussian Belief Propagation, a message-passing method that exchanges estimates together with their uncertainty. It acts like a constant internal conversation, allowing both arms to adjust in real time.

They avoid collisions with each other and with nearby objects, and they do not need to stop and replan from scratch.
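The article does not describe the team's factor graph, so the Python sketch below only illustrates Gaussian Belief Propagation itself on a toy problem. Each hypothetical "arm" is reduced to a single position along a table edge. Each arm prefers its own learned target, and a coupling term asks the two to stay about 0.3 m apart. The arms exchange Gaussian messages, each made up of a precision and an information value. On a loop-free graph like this one, the result matches an exact linear solve. All names and numbers are assumptions made for the example.

```python
import numpy as np

def gaussian_bp(A, b, iters=20):
    """Gaussian belief propagation for p(x) ∝ exp(-0.5 x^T A x + b^T x).

    Returns marginal means and variances. Exact on tree-structured (loop-free)
    graphs; on loopy graphs it may or may not converge.
    """
    A = np.asarray(A, dtype=float)
    b = np.asarray(b, dtype=float)
    n = len(b)
    nbrs = [[j for j in range(n) if j != i and A[i, j] != 0.0] for i in range(n)]
    P = np.zeros((n, n))  # P[i, j]: precision of the message i -> j
    h = np.zeros((n, n))  # h[i, j]: information (precision * mean) of message i -> j
    for _ in range(iters):
        P_new, h_new = np.zeros_like(P), np.zeros_like(h)
        for i in range(n):
            for j in nbrs[i]:
                # Node i's own belief combined with messages from all neighbours except j.
                alpha = A[i, i] + sum(P[k, i] for k in nbrs[i] if k != j)
                beta = b[i] + sum(h[k, i] for k in nbrs[i] if k != j)
                P_new[i, j] = -A[i, j] ** 2 / alpha
                h_new[i, j] = -A[i, j] * beta / alpha
        P, h = P_new, h_new
    prec = np.array([A[i, i] + sum(P[k, i] for k in nbrs[i]) for i in range(n)])
    mean = np.array([(b[i] + sum(h[k, i] for k in nbrs[i])) / prec[i] for i in range(n)])
    return mean, 1.0 / prec

# Hypothetical toy problem: two arms' 1-D positions along a shared table edge.
targets = np.array([0.0, 0.1])   # where each arm would go on its own
prior_prec = 1.0                 # how strongly each arm sticks to its target
gap, couple_prec = 0.3, 10.0     # desired separation (m) and its strength

A = np.diag([prior_prec, prior_prec])
b = prior_prec * targets
# Coupling factor exp(-0.5 * couple_prec * (x2 - x1 - gap)^2) in information form.
A += couple_prec * np.array([[1.0, -1.0], [-1.0, 1.0]])
b += couple_prec * gap * np.array([-1.0, 1.0])

mean, var = gaussian_bp(A, b)
print(mean)  # close to [-0.095, 0.195]: the arms spread apart from 0.0 and 0.1
```

Neither arm simply claims its preferred spot. Each gives way a little, in proportion to how firmly it holds its own target relative to the strength of the separation term.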

The result is movement that adapts smoothly when conditions change. The researchers describe the learned motion as behaving like a rubber band.

If the target shifts, the path stretches and reshapes while keeping the core features of the action. If the robot pours water, it keeps the bottle upright to avoid spills, even if the cup sits in a slightly different spot.
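The rubber-band behavior can be illustrated with the deliberately simple Python stand-in below. It is not the researchers' method. A hypothetical `retarget` function adds an offset that grows from zero at the start of a demonstrated path to the full displacement at its end. The path's curves survive while its endpoint moves to the new cup position. The orientation channel, here a single assumed tilt angle, is copied unchanged, which keeps the bottle upright.

```python
import numpy as np

def retarget(positions, orientations, new_goal):
    """Stretch a demonstrated path so that it ends at new_goal.

    positions: (T, 3) demonstrated xyz points; orientations: (T, k), e.g. tilt angles.
    The added offset is 0 at the first point and (new_goal - old_goal) at the last,
    so bumps and curves in the path are kept while its endpoint moves.
    Orientations are copied unchanged, so an upright bottle stays upright.
    """
    positions = np.asarray(positions, dtype=float)
    phase = np.linspace(0.0, 1.0, len(positions))[:, None]  # 0 at start, 1 at end
    shift = np.asarray(new_goal, dtype=float) - positions[-1]
    return positions + phase * shift, np.array(orientations, dtype=float, copy=True)

# Hypothetical demonstration: carry a bottle 0.4 m along x in a 10 cm high arc.
t = np.linspace(0.0, 1.0, 20)
demo_pos = np.stack([0.4 * t, np.zeros_like(t), 0.1 * np.sin(np.pi * t)], axis=1)
demo_tilt = np.zeros((20, 1))  # 0 rad tilt: bottle kept upright throughout

# The cup now sits 5 cm further along x and 5 cm to the side.
new_pos, new_tilt = retarget(demo_pos, demo_tilt, new_goal=[0.45, 0.05, 0.0])
```

A linear offset is the crudest possible "stretch". Learned methods can instead decide which features of the motion to preserve, but the idea of bending a demonstration rather than replaying it is the same.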

In a paper presented at IROS 2025 by researchers Adrián Prados and Gonzalo Espinoza, the team showed that this method worked both in simulation and on real domestic robots.

“The ultimate goal is for robots to stop being simple movement recorders and become authentic coworkers, capable of perceiving their environment, anticipating actions, and collaborating safely in human spaces,” said Prados.

Inside robot decision-making

Under the hood, ADAM follows a clear cycle: perception, reasoning, and action.

First, it senses the world. It uses 2D and 3D laser sensors to measure distances, detect obstacles, and locate objects. It also relies on RGB cameras with depth information to build three-dimensional models of its surroundings.

Next comes reasoning. The robot processes the data and extracts what matters. It identifies objects and evaluates the situation. Then it acts. It may move its base, coordinate both arms, or execute a specific task like picking up a cup.
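The perception, reasoning, and action cycle can be sketched as three small Python functions. Everything here is hypothetical. Real perception would fuse laser scans and RGB-D images, whereas this sketch starts from already-labeled (object, distance) pairs. The 0.8 m reach threshold is an invented number.

```python
from dataclasses import dataclass

@dataclass
class Detection:
    label: str
    distance_m: float

def perceive(sensor_readings):
    """Perception: turn raw readings into detected objects (stubbed as labeled pairs)."""
    return [Detection(label, dist) for label, dist in sensor_readings]

def reason(detections, task_object, reach_m=0.8):
    """Reasoning: choose the next action from what was perceived."""
    target = next((d for d in detections if d.label == task_object), None)
    if target is None:
        return ("search", None)
    if target.distance_m > reach_m:
        return ("move_base", target)
    return ("grasp", target)

def act(action):
    """Action: dispatch to base or arm controllers (here, just describe the command)."""
    kind, target = action
    if kind == "search":
        return "rotate in place and look again"
    if kind == "move_base":
        return f"drive toward {target.label} ({target.distance_m:.1f} m away)"
    return f"coordinate both arms to grasp {target.label}"

def cycle(sensor_readings, task_object):
    """One pass of the perception -> reasoning -> action loop."""
    return act(reason(perceive(sensor_readings), task_object))

print(cycle([("table", 2.0), ("cup", 1.5)], "cup"))  # drive toward cup (1.5 m away)
print(cycle([("cup", 0.5)], "cup"))                  # coordinate both arms to grasp cup
```

On a real robot the loop runs continuously. Each new sensor reading can change the chosen action, which is what lets the robot react when a cup or a person moves.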

Understanding objects is one of the toughest parts. Seeing a mug is not the same as knowing it holds coffee or that it should stay upright.

In the past, robots relied on large databases of common-sense knowledge. Now researchers are working to add generative AI models. These systems aim to help the robot adapt its behavior to what is happening in the moment.

Rising demand for helpers

Right now, ADAM is an experimental platform. It costs between about $94,000 and $119,000 (80,000–100,000 euros). That price keeps it in research labs.

Still, the team believes the technology is mature enough that, within 10 to 15 years, similar robots could appear in homes at a much lower cost.

The timing matters. Many countries face rising numbers of older adults and fewer caregivers. Families feel the strain. Care facilities struggle to keep up. Technology will not replace human care, but it can fill gaps.

“Every day there are more elderly people in our society and fewer people who can care for them, so these types of technological solutions are going to become increasingly necessary,” said Barber.

“In this context, assistive robots are emerging as a key tool to improve the quality of life and autonomy of people.”

Originally written by: Rodielon Putol

Source: Earth.com

Published on: 19 February 2026

Link to original article: Robots learn to coordinate both arms for safer home assistance