Opinion · March 2026

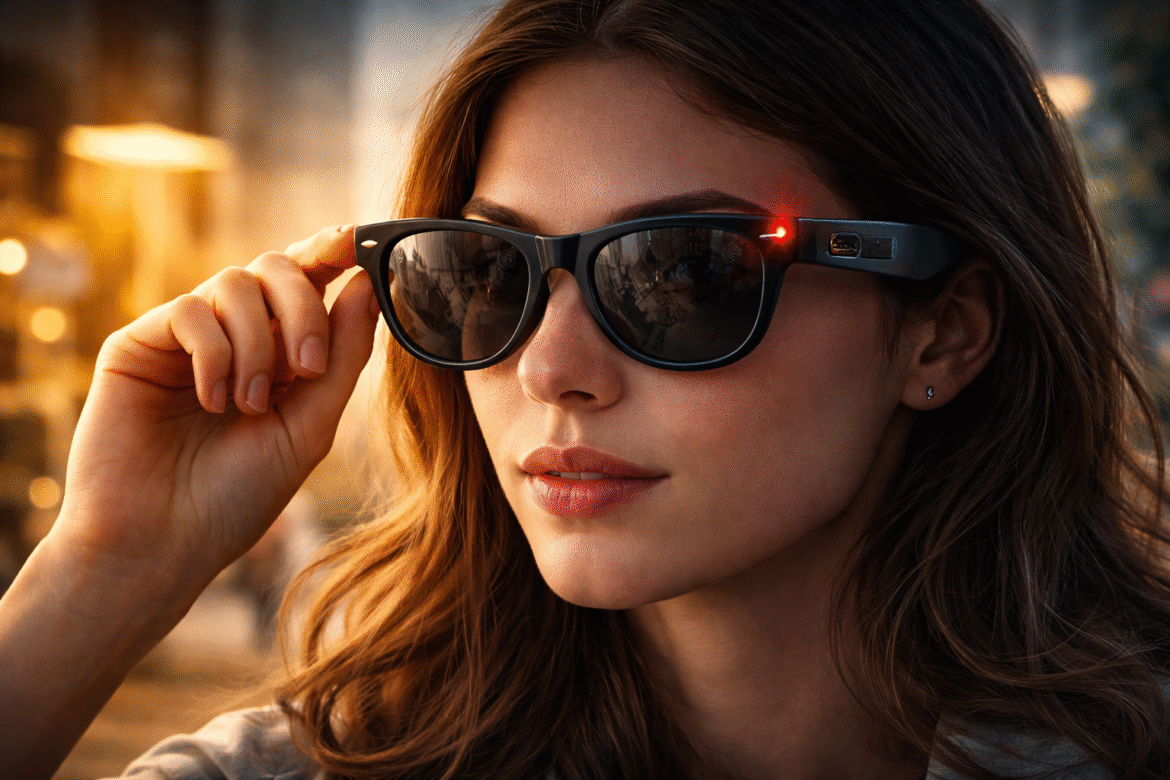

Let’s start with what did not make the Meta Ray-Ban launch deck. The glasses record. They listen. They upload. And somewhere in that pipeline, contractors in Nairobi are reviewing footage of people undressing.

Not a glitch. Not a hack. The product working as designed.

Footage gets routed through human review teams who label it so Meta’s AI can improve. Some of that footage contains moments that were never meant to be seen by anyone. Bathroom trips. Intimate encounters. Captured by a device sitting on someone’s face, doing exactly what it was built to do.

Here is why this is a different problem from anything before it:

- Your phone sits in your pocket. You decide when it comes out.

- Glasses sit on your face, pointed at everything, always.

- Bystanders have no idea they are being recorded.

- The camera cannot tell a busy street from someone’s bedroom.

- The pipeline does not care either way.

Human annotation is standard in AI development. Someone has to label the data; that is not the scandal. The scandal is that the device has no off switch, no contextual awareness, no ability to separate public from private. It captures everything and hands it downstream. What happens next depends on governance frameworks built for a world where data collection had edges. This one does not.

What that actually looks like in practice:

- A product ships.

- Data flows.

- Sensitive footage enters the pipeline.

- Someone handles it under terms never designed for this moment.

- Nobody in the original product meeting planned for it. But they built the system that made it inevitable.

Companies keep treating data governance like a legal checkbox to tick before launch. It is not. It is an operational question that runs through every layer: how data moves, who touches it, under what conditions, and what happens when something sensitive gets through. And something sensitive will always get through. That is not pessimism; it is what happens when you build a camera that never stops looking.

Written policies do not un-see footage. A privacy framework living in a PDF does not protect the person who had no idea they were being recorded.

The smart glasses market will grow. More devices will ship. More data will move through more pipelines touching more hands across more continents. The industry knows this. The question is whether accountability gets built in before the next story breaks, or gets explained away after.

Judging by current form, we already know which way they are betting.

Who is actually responsible when AI hardware captures something it should never have seen? The company that built the device? The contractor who reviewed it? The person who wore the glasses into a private space? The governance framework that covered none of it? Stop scrolling and say what you think.