Researchers report that a shared AI software stack is now being tested across industrial robots and surgical systems, marking one of the first coordinated deployments of physical AI at scale.

That shift brings machine intelligence out of controlled lab environments and into real-world settings where performance must hold under unpredictable conditions.

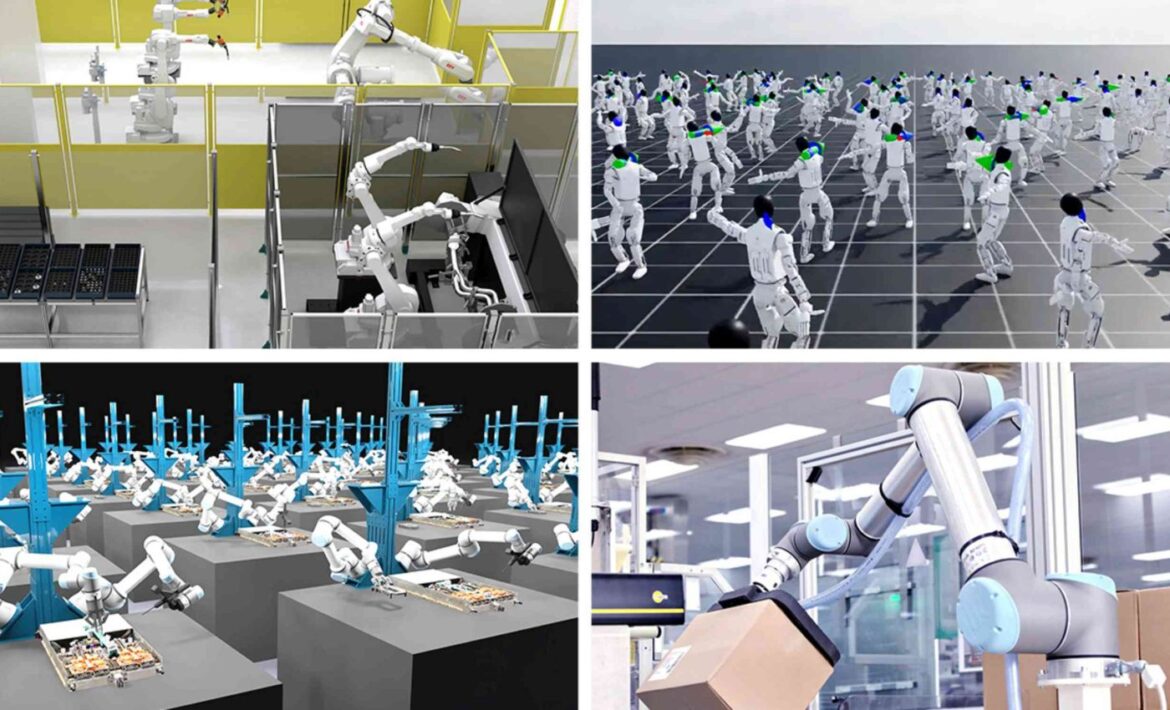

AI robots in factories

Inside detailed virtual replicas of factory floors, robotic systems now rehearse full assembly processes before any physical deployment.

Engineers at NVIDIA have connected advanced simulation software with industrial robots to observe how these systems perform under realistic production conditions.

Across these environments, the same robots can practice complex sequences repeatedly, revealing errors and limitations before they appear in live operations.

That reliance on simulation sets a boundary, because success in controlled digital conditions does not guarantee reliable performance once machines face real-world variability.

Brains beyond scripts

Instead of teaching each arm one motion, developers are training broader control software that can handle new jobs with less retraining.

Some systems now generate plausible physical scenarios ahead of time, allowing robots to practice tasks before encountering them in real environments.

Developers are testing whether this simulated training still holds up when robots face unpredictable, real-world conditions.

That matters because even flexible robots can fail when their surroundings change, and extra practice data can fill many of those gaps before deployment.

Humanoid robots and AI

Humanoid builders want robots that can open doors, move bins and handle cables without a full software rewrite every time.

New training systems now scale robot learning across larger environments while modeling how multiple physical forces interact during movement.

Early versions of these systems show improved success when robots attempt unfamiliar tasks in new settings.

Even with these improvements, robots still require fast onboard computing once they leave simulated environments.

AI robots and hospitals

Hospitals are joining the same push, but here the bottleneck is not speed alone; it is safety, approval and trust.

New AI-driven systems are also being tested in surgical robotics to prepare machines for use in clinical settings.

Because surgical robots operate around fragile tissue, developers train them in simulation before clinical teams rely on them.

That raises the bar for physical AI, because a bad warehouse pick slows shipments, while a bad surgical move can hurt patients.

Smaller shops matter

Big manufacturers are not the only target, because smaller plants often lack the engineers needed to reprogram robots for each new part.

Some platforms can now train robots to handle new parts in minutes, reducing the need for manual reprogramming.

Shared control software is being applied across different robot types to reuse the same learned behaviors.

If that approach holds outside demos, smaller firms could buy capability instead of custom code, and automation would spread faster.

Warehouses test scale

Warehouse fleets show why scale matters, since one robot can look smart while a crowded aisle turns the whole system clumsy.

Large virtual warehouse environments are now used to train autonomous machines to handle navigation, timing and coordination at scale.

Those rehearsals matter because forklifts share aisles with people, pallets and delays that wreck neat lab behavior.

Logistics may become the clearest early test, since the work repeats constantly and mistakes show up in throughput fast.

Clouds feed robots

Training these systems now depends on far more examples than most robot teams can gather on their own floors.

Cloud providers are pairing NVIDIA tools with synthetic data, meaning computer-made examples of rare situations, so that robots can be trained on failure cases at scale.

That lets developers generate blocked views, awkward part positions and other edge cases without waiting months for real footage.

More compute does not guarantee better behavior, but it can expose weak robot skills before workers or patients pay the price.

Openness widens access

NVIDIA is also widening the talent pool instead of keeping robotics tools inside a few elite labs.

Its open tools now connect millions of robotics developers with a much larger community of AI builders, while startup programs support tens of thousands of new entrants.

That kind of access matters because open tools let smaller teams copy, test and improve ideas without huge vendor deals.

Open ecosystems can also spread bad habits quickly, so shared evaluation standards become more important, not less.

AI, robots, and the future

“Physical AI has arrived – every industrial company will become a robotics company,” said Jensen Huang, founder and CEO of NVIDIA.

For now, much of what the company announced still sits in early access, preview status or partnership pilots.

Robot learning fails in ordinary places because lighting changes, objects wear down and people do not move like scripted tests.

Even strong benchmarks, standardized tests for comparing systems, can miss site-specific hazards, leaving uptime and safety as the real verdict.

NVIDIA is trying to become the common operating layer beneath factory arms, warehouse fleets, humanoids and surgical robots, not just a chip supplier.

Success will depend less on flashy robot videos than on repeated safe performance, because physical AI only counts when it survives real work.

Originally written by: Jordan Joseph

Source: Earth.com

Published on: 1 April 2026

Link to original article: NVIDIA and robotics companies are working to deploy AI-powered robots in factories and hospitals