The US military reportedly used Anthropic’s Claude when it launched its first attack on Iran almost one week ago, shining a spotlight on the use of large language models, a form of artificial intelligence, in modern warfare.

The new era of artificial intelligence permits military decisions to be made far more quickly, providing an edge to those utilising it in war.

But what are its capabilities, and what are the risks of automating warfare that has real human consequences? ITV News explains.

Artificial intelligence is becoming more sophisticated

Artificial intelligence (AI) has been used in the military for decades. During the Cold War, the US Navy used forms of AI to process vast amounts of surveillance data.

By the 1990s, they were using AI to schedule the transportation of supplies or personnel, saving millions of dollars in the process.

But over the years, AI has become increasingly sophisticated, with Israel reportedly using it in Gaza to select potential buildings and people to strike, according to intelligence sources.

It has also become extremely useful in military defence because of its ability to identify missiles and form a response very quickly.

Last year, Ukraine deployed a fully AI-controlled turret, designed to detect and shoot down Russian drones without any human involvement.

How was the US using AI before the Iran war?

The US Department of Defence struck deals with several major AI firms in July last year, but Claude, the model made by Anthropic, was the only one permitted to be deployed on the military’s classified systems.

The company said it had been “extensively deployed” across the department and national security agencies, for “intelligence analysis, modelling and simulation, operational planning, cyber operations, and more”.

A boy tries to climb on an unexploded Iranian projectile that landed in an open field on the outskirts of Qamishli, eastern Syria. Credit: AP

Claude was reportedly also used in the military operation in Venezuela, which saw US forces capture and extract President Nicolás Maduro from the country.

Details of precisely how it was used aren’t available, but David Galbreath, Professor of War and Technology at the University of Bath, told ITV News it was likely used for intelligence.

“You would have had lots of drones and other types of aircraft hoovering up data. How people are moving throughout the city, all the telecoms that are happening, and what traffic is like,” he said.

“You’re accumulating a large amount of data. And that’s where those large language models are particularly useful in being able to collect all that data and then trying to then show intelligence officers some of the key patterns that emerge out of that.”

The intelligence officers would then use human judgment to decipher how those patterns could be useful for operations.

Trump delivered an eight-minute video statement on Truth Social saying the US had begun “major combat operations” in Iran. Credit: Truth Social/@realDonaldTrump

The breakdown in the Trump administration’s relationship with Anthropic

The day before the US and Israel began striking Iranian targets, Anthropic released a statement reiterating its ethical stance that Claude should not and would not be used for domestic mass surveillance or fully automated weapons.

Regarding automated weapons, the company said AI systems are “simply not reliable enough” to make critical judgements, and “soldiers and civilians could be put at risk”.

The Defence Department had previously announced it would only award contracts to companies that allow “any lawful use” of their models.

Anthropic said this would cross its ethical red lines, and that it could not “in good conscience accede to their request”.

Before the attack began, Trump ordered all government agencies to immediately stop using Claude, designating Anthropic a supply chain risk.

The president said the Department of Defence would have six months to phase out its military use of the “radical-left” company’s technology.

Hours later, OpenAI signed a deal with the Pentagon, reportedly with similar restrictions over autonomous weapons systems and domestic mass surveillance.


How is AI being utilised in the Iran war?

The US military has reportedly been using Claude in its Iran operations in the months leading up to the attack.

Claude’s ability to process and summarise data gathered by drones and aircraft over Iran would allow decisions to be made in a fraction of the time they would have taken in past wars.

“We know that these targeting systems are essentially relying on this sort of data gathering,” Professor Galbreath said.

“You can ask or prompt a large language model to do a search of a map that would allow you to pinpoint different areas, for instance, anything that has the Islamic Revolutionary Guard or any building that it holds.”

“You can make sure that actually not only do you have them all on file, but actually as they become available and as your targeting options become available, you have that information, and you can go ahead and actually push that button or pull that trigger whenever that arises.”

The risks of using AI in warfare

Some risks stem from the prompts an AI model is given.

If a prompt is skewed one way, the output can be too, as the AI tries to follow its instructions. This has been seen in some of the controversies surrounding Elon Musk’s chatbot Grok, which began making antisemitic comments in response to user queries.

Other risks come from an automated system being unable to decipher the nuance of a dangerous and complex situation when tasked with summarising it.

“The biggest challenge, I think, is just understanding how artificial intelligence affects the way that we think about combat”, Professor Galbreath said.

Before artificial intelligence, agents would conduct reconnaissance on the ground, hoovering up as much information as they could get and reporting it back, where humans would try to make sense of it.

“Militaries are really trying to think about artificial intelligence as being something that probably gives the breadth and depth of information that they probably always wanted.

“But there’s always a danger that sometimes your intelligence will give you either faulty reports or reports that actually turn out to be not very operationally valuable.”

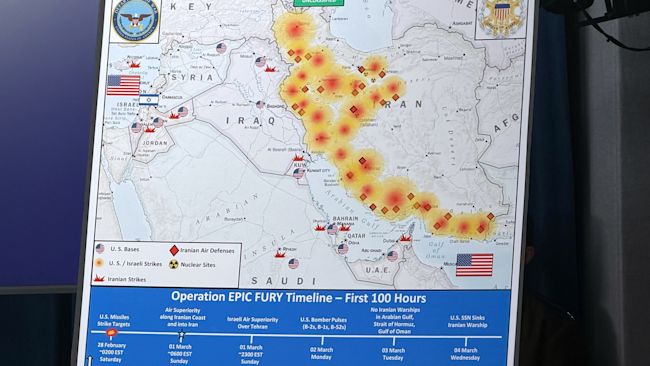

A Dept. of Defense map entitled Operation EPIC FURY. Credit: AP

Does AI make warfare more deadly?

Professor Galbreath said the increasing use of AI in military forces has not necessarily “made a difference in terms of the overall lethality of the war.”

“It’s already quite lethal, but it hasn’t become more lethal because of AI,” he said.

AI, however, does provide an edge to those who use it and could tip wars in favour of those whose artificial intelligence moves the quickest and is the most accurate.

It allows militaries to target more widely than has ever been possible, and to gather information even while targeting.

Galbreath added that perhaps it is the lack of absolute control over artificial intelligence, or of a full understanding of its capabilities, that causes so much fear about its deployment.

“I think that there is just a general feeling that artificial intelligence is something that we don’t have full control over. We just think we have full control over.

“I think as long as that kind of idea continues to go on, of thinking of it as an alternative intelligence, the fear will be that AI is doing something to war that actually wasn’t being done already.”

Originally written by: Sarah Locke

Source: ITV News

Published on: 5 March 2026

Link to original article: How is AI shaping the conflict in Iran?